The Potential and Limitations of the Chinese PLA’s Use of ChatGPT

The People’s Liberation Army of China is eager to be the first to reap the benefits of the revolutionary military applications of generative artificial intelligence. However, the PLA faces political, economic, and scientific hurdles, some of which are shared by U.S. artificial intelligence developers.

“Overall, China understands the need to be a first mover (or close follower) in generative AI on the battlefield to firmly grasp the strategic initiative of intelligent warfare and seize the commanding heights of future military competition,” Josh Baughman, an analyst at Air University’s China Aerospace Studies Institute, wrote in a new paper published on August 21.

Baughman, however, pointed out that much like their American counterparts, Chinese policymakers are hesitant to fully adopt the technology without first doing extensive testing.

Baughman told Air & Space Forces Magazine, “When we talk about generative AI in a military application, people’s lives are on the line depending on how we apply it.” With so much at stake, trust is essential.

Generative artificial intelligence, as defined by IBM, refers to models “that can generate high-quality text, images, and other content based on the data they were trained on.” ChatGPT, a chatbot that can produce poetry, college essays, song lyrics, and other creative material, is perhaps the best-known implementation of generative AI.

Experts in military strategy, such as Air Force Secretary Frank Kendall, believe the technology has the potential to aid in mission completion and decision making on the battlefield; nevertheless, it may be some time before such systems can be depended upon in combat.

There seems to be a similar consensus within the PLA: Baughman identified a number of PLA media outlets that agreed AI will have a role in future conflicts and might have a decisive impact in seven distinct domains:

Interaction between humans and machines: Generative AI’s dual language comprehension capabilities mean it might speed up the analysis of massive data sets. Baughman cited a PLA prediction that software similar to ChatGPT will evolve into a collaborative combat system able to organize missions, designate goals, and attack targets.

Decision-making: Generative AI’s ability to quickly digest vast volumes of data might speed up the process of selecting the most effective military action plan and pave the way for decentralized leadership of dispersed forces.

Network warfare: According to Baughman’s writing on PLA media, generative AI might help to “design, write, and execute malicious code, build bots and websites to trick users into sharing their information, and launch highly targeted social engineering scams and phishing campaigns” in both offensive and defensive network warfare. In the future, such attacks might grow so advanced that only AI defenses would be effective.

Cognitive domain: PLA media outlets propose employing generative AI to “efficiently generate massive amounts of fake news, fake pictures, and even fake videos to confuse the public,” as Baughman put it.

Logistics: Generative AI has the potential to speed up the process of allocating resources, managing warehouses, planning supply routes, and spotting inefficiencies. Forecasting demand for materiel might also benefit from its use.

Space: In the space sector, where satellites travel at many times the speed of sound, generative AI might be used to monitor their health. Engineers might use this information to design better rockets and spacecraft.

Training: The PLA lacks actual combat experience, but generative AI, in conjunction with previous training data and new intelligence, might help “quickly build combat simulation through simple human language descriptions,” as PLA authors put it.

Challenges

Despite the various potential uses, Baughman notes that building generative AI for military purposes in China presents a number of problems, some of which are specific to the country but many of which are shared by developers elsewhere.

Generative AI relies on massive volumes of data, yet the Chinese Communist Party has made certain data off limits. Article four of the party’s rules for generative AI states that such programs “must not generate content that incites subversion of State power and must adhere to the socialist core values.”

In a reference to the censorship of information about the 1989 Tiananmen Square demonstrations, Baughman’s research cites a Chinese CEO joking that Chinese large language models cannot count to 10 because doing so would involve the digits eight and nine. Not every military application of generative AI would be affected, but information limits may hinder progress in certain areas.

Baughman said, “There is a party problem,” but he argued that “pure engineering or technical applications” wouldn’t be affected.

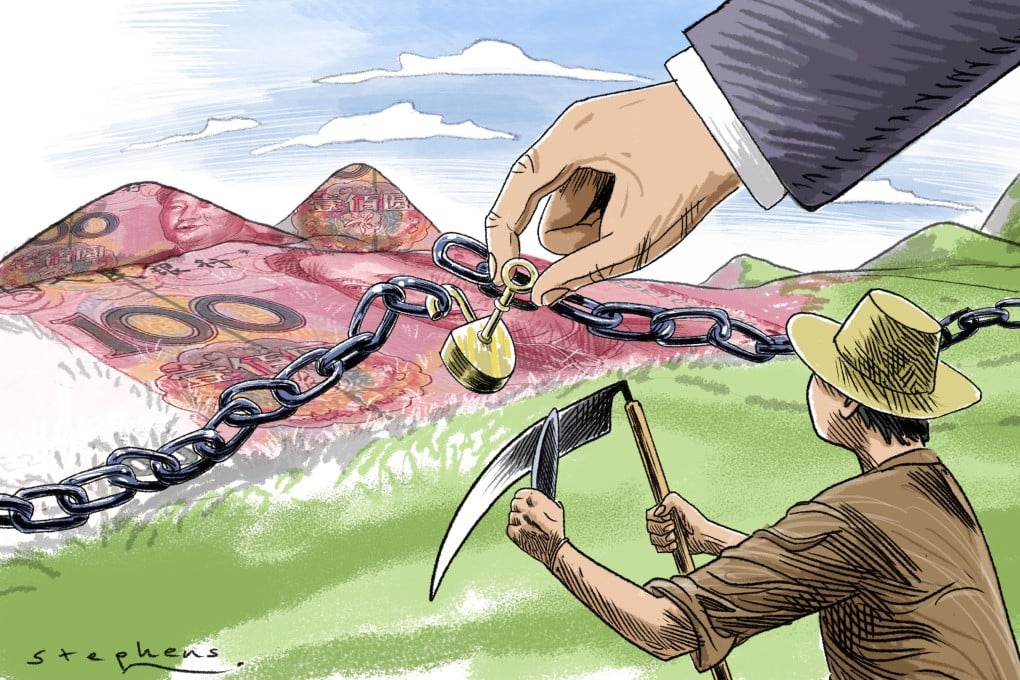

Shortage of silicon: Generative AI depends on a huge amount of computational power, and powerful computers need advanced processors. Sanctions imposed by the United States restrict China’s access to such chips, but these restrictions are difficult to enforce, and China has been working for some time to develop its own semiconductor infrastructure.

Corruption: The Chinese government is spending a lot of money on artificial intelligence, but much of it goes to companies with more political ties than technical skill, according to a May report by AI researcher Gregory Allen of the Center for Strategic and International Studies. Allen warned that the situation might worsen if U.S. sanctions eliminate competition from foreign exporters.

Data sets: Both Chinese and American AI engineers struggle to assemble suitable data sets, but the PLA may feel the problem more keenly owing to its lack of real-world combat experience, Baughman noted.

Data has to be correctly labelled, processed, and analyzed for optimization to take place. The “availability and interpretability of the data are poor,” according to PLA media stories quoted by Baughman, and there is not enough engagement with expert end-users for the approach to be effective in practice.

Like their U.S. counterparts, Chinese authorities are afraid of losing control of military AI, and PLA authors emphasize keeping a human in the loop of systems that employ it.

Baughman noted, “The PLA most certainly wants to be the first mover on applying a more comprehensive application of Generative AI on the battlefield.” However, the PLA won’t do so until it can have full confidence in the technology.

Still in the Game

Baughman claims that, despite obstacles, China is either on par with or ahead of the United States in AI development. The CCP’s national aim to make Chinese society more efficient and competitive via widespread digital transformation is known as “Digital China,” and AI is a fundamental component of this strategy.

Advancing “these emerging technologies” is “inextricably linked” in China, he added, to “rejuvenating China and with maintaining the legitimacy of the party.” “This is an issue of the highest priority.”

At least one military use of generative AI is feasible right now. The PLA authors explore the use of AI in the cognitive domain to “destroy the image of the government, change the standpoint of the people, divide society, and overthrow the regime” by flooding the public with fake news, videos, and other information that plays on people’s worries and suspicions.

“That is not something years in the future, it is something they can do today,” Baughman said, adding, “and the scale that they could do it at is just unreal.”

As technology progresses quickly, so will the nature of the risks that we face.

“Everything is going to be moving faster and evolving faster,” he said. It is imperative that the United States be ready for these seismic shifts. Consider how far generative AI has come in the last six months. Its effects will be far-reaching, touching everything from the military to the economy.